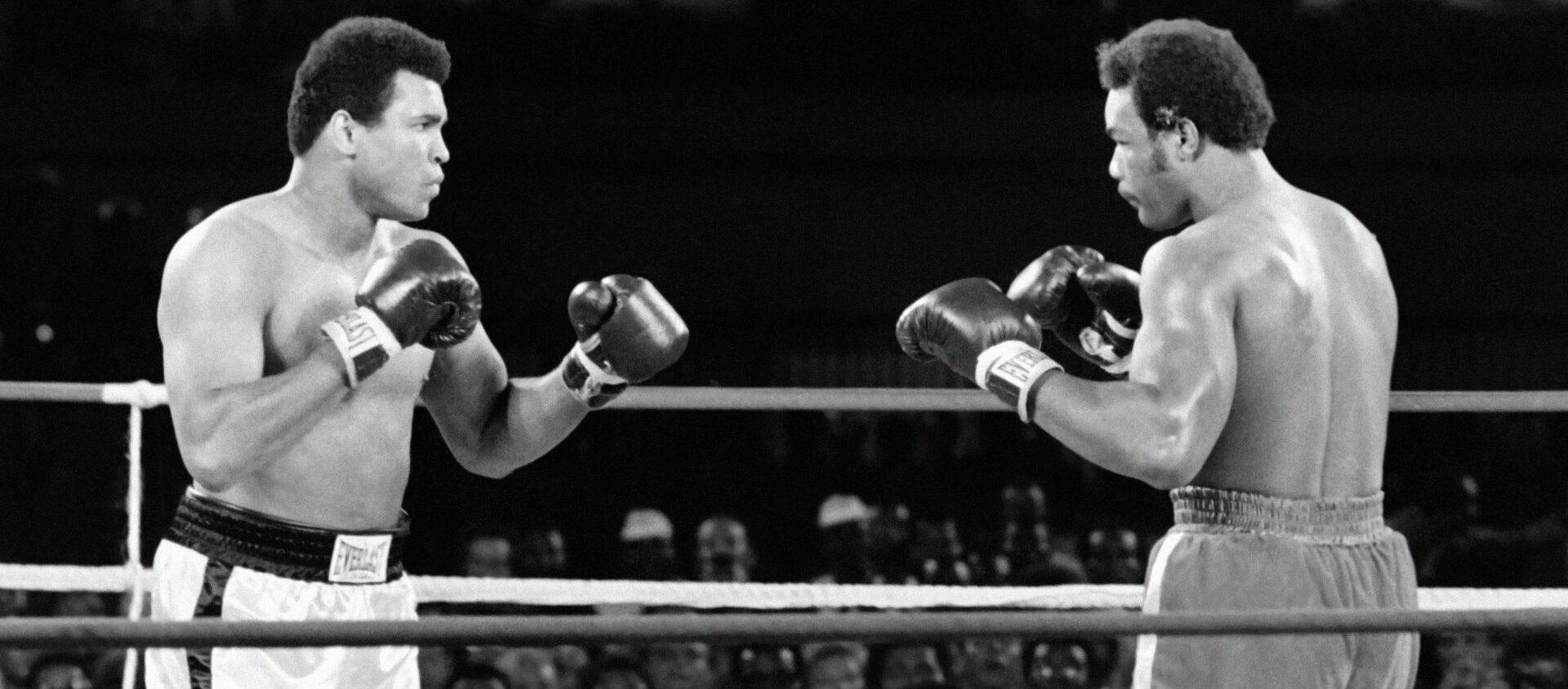

As we pass the first anniversary of the start of the most recent tech hype tsunami (farewell NFTs, we hardly knew ye), it’s becoming a little clearer how many enterprises are likely to start their journey with Generative AI, and it’s in areas that are traditionally the domain of the CIO rather than the CDO. So, does that mean the CDO role will be sidelined in the rush to GenAI? Or should the CDO suit up and go head-to-head with the CIO to lay claim to this growing area? Can we expect a CDO-CIO Rumble in the Jungle?

Goodbye, Seattle

Five years ago today (September 1, 2019), my family and I stepped off the plane from Seattle at Heathrow, having spent the previous month frantically packing our belongings, saying goodbye to friends, and preparing to pick up our life in the UK again, almost thirteen years after we left in 2006. But it was only when I returned last month for a visit that I felt the time had come to say goodbye to the city.